Where are we in the AI bull market?

The rally in equities and AI stocks off the March lows has been violent and swift. Most of the investors I speak to, or whose content I consume, are either bullish but locked out of this rally, or cautious and skeptical. I guess this makes me a contrarian, because I am fully invested in my long-term portfolio and global macro trading book.

If I were working at a hedge fund, I would be fired for style drift. Fortunately, I have the freedom to write my own mandate and to go where the best opportunities are. At the moment, the best opportunity is not in rates, FX, or crypto, but in AI. The speed and scale of the AI buildout are overwhelming traditional global macro forces, creating a secular investment cycle that appears less sensitive to the usual zigs and zags of monetary and fiscal policy.

My view is that the AI boom has years to run. The market will eventually enter bubble territory, but I do not think we are there yet: a true bubble is when the story becomes socially ubiquitous, valuations detach from any plausible revenue path, and everyone from your grandmother to the office tea lady is pitching you optical networking stocks. Having traded through the dot-com bubble, I would compare today less to 1999 or early 2000 and more to 1997–1998, well before the Nasdaq peak in March 2000.

There are a number of reasons why I think we are still early:

The inflection from chat-based LLM products to agentic AI happened only 4-5 months ago. The market is just beginning to adopt agents that can reason, use tools, write code, search, transact, and execute multi-step workflows. This shift has the potential to drive a 10x step change in AI spend and a 100x increase in token consumption, because agents use far more inference than simple chatbot interactions.

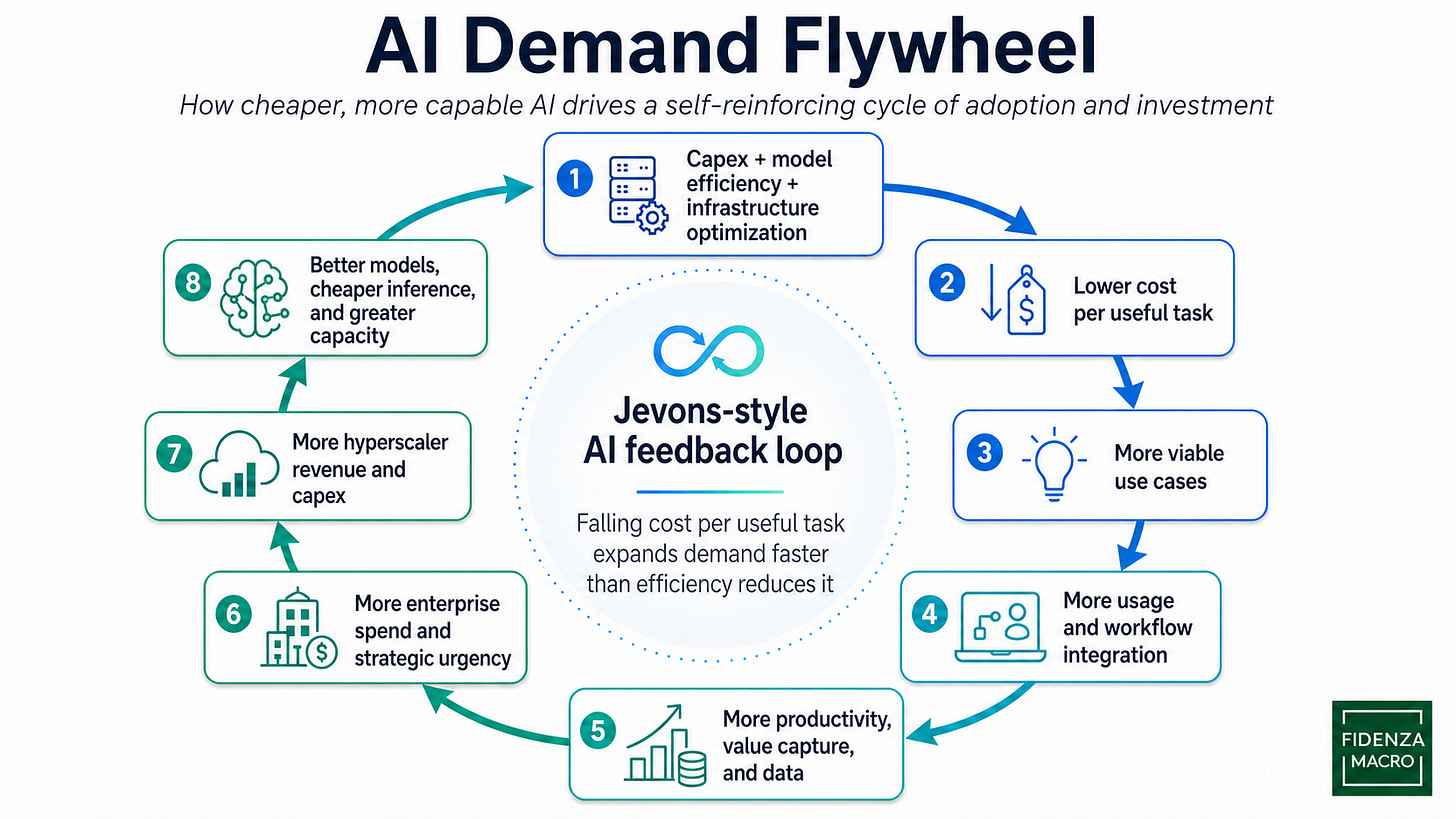

AI is entering a Jevons paradox-driven demand flywheel: hardware capex, model efficiency, networking, and software optimization drive down the cost of useful intelligence → cheaper and more capable inference expands the number of economically viable use cases → broader usage generates more data, workflow integration, revenue, and strategic urgency → this justifies more enterprise adoption and hyperscaler capex → larger scale funds the next wave of chips, data centers, model training, inference infrastructure, and application-layer experimentation.
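To make the Jevons logic concrete, here is a minimal sketch in Python. It assumes a constant-elasticity demand curve for tokens, which is a textbook simplification and not a forecast. It also reads the 10x spend and 100x token figures mentioned earlier as if they were driven by price declines alone, which is an assumption made purely for illustration. The function names are hypothetical.

```python
import math


def implied_price_multiple(spend_multiple: float, token_multiple: float) -> float:
    """Blended price-per-token multiple implied by spend and volume multiples.

    Spend = price * tokens, so price multiple = spend multiple / token multiple.
    """
    return spend_multiple / token_multiple


def implied_elasticity(price_multiple: float, token_multiple: float) -> float:
    """Elasticity e under the ASSUMED demand curve Q = k * P**(-e).

    Taking logs of the ratio: e = -ln(token multiple) / ln(price multiple).
    """
    return -math.log(token_multiple) / math.log(price_multiple)


def spend_multiple(price_multiple: float, elasticity: float) -> float:
    """Change in total spend after a price change, under the same assumption.

    Spend = P * Q = k * P**(1 - e), so the multiple is price_multiple**(1 - e).
    """
    return price_multiple ** (1.0 - elasticity)


# Illustrative inputs taken from the 10x spend / 100x token scenario.
price = implied_price_multiple(spend_multiple=10.0, token_multiple=100.0)
elasticity = implied_elasticity(price, token_multiple=100.0)

print(f"implied price multiple: {price:.2f}")
print(f"implied demand elasticity: {elasticity:.2f}")

# Compare elastic and inelastic demand for the same price cut.
for e in (0.5, 1.0, 2.0):
    print(f"elasticity {e}: spend multiple {spend_multiple(price, e):.2f}")
```

Under these assumptions, a 10x fall in the cost of a token only grows total spend if demand elasticity is above 1. At an elasticity of 1 spend is flat, and below 1 cheaper inference shrinks the revenue pool. The flywheel therefore depends on new use cases opening up faster than unit prices fall.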

More inflection points are in the pipeline. AI will increasingly move from the cloud to the edge through smartphones, PCs, wearables, smart glasses, and other personal devices. Physical AI in robotics and automation will add another layer of demand because it requires multimodal training and inference across video, audio, speech, tactile inputs, and real-world control systems.

The AI infrastructure trade is broadening beyond GPUs as the bottlenecks shift toward networking, memory bandwidth, CPU orchestration, advanced packaging, power density, and data-center design. As workloads move from training toward inference, agents, and test-time compute, companies such as Intel and Arm may benefit from increased CPU demand for scheduling, orchestration, memory management, data movement, and coordinating large numbers of model calls. Nvidia’s move from Blackwell to Vera Rubin should also raise bandwidth requirements, expanding the opportunity for networking silicon and optical components. For these emerging AI infrastructure winners, the earnings cycle may still be early as larger clusters, higher inference traffic, and more complex agentic workloads expand their addressable markets.

Enterprise adoption is still underpenetrated. Most companies are still in the experimentation or early rollout phase, using AI for copilots, coding assistance, customer support, document processing, and internal knowledge search rather than fully redesigned workflows. The next leg of the cycle comes when AI moves from optional productivity tool to embedded operating layer across enterprise software, where agents run continuously in the background and convert sporadic human prompts into persistent inference demand.

I am largely invested in the bottleneck industries that will determine the speed and scale of the AI boom: primarily energy provision, memory, and networking. The leaders in these sectors have tremendous pricing power and enormous backlogs that will sustain earnings growth for at least the next few years. I’m fully invested in my long-term portfolios and have allocated 30-40% of my global macro capital to this theme as well.

Paid subscriber section: Current portfolio composition